Development Tools

Category for discussing development tools and IDEs for VoiceXML and voice applications.

-

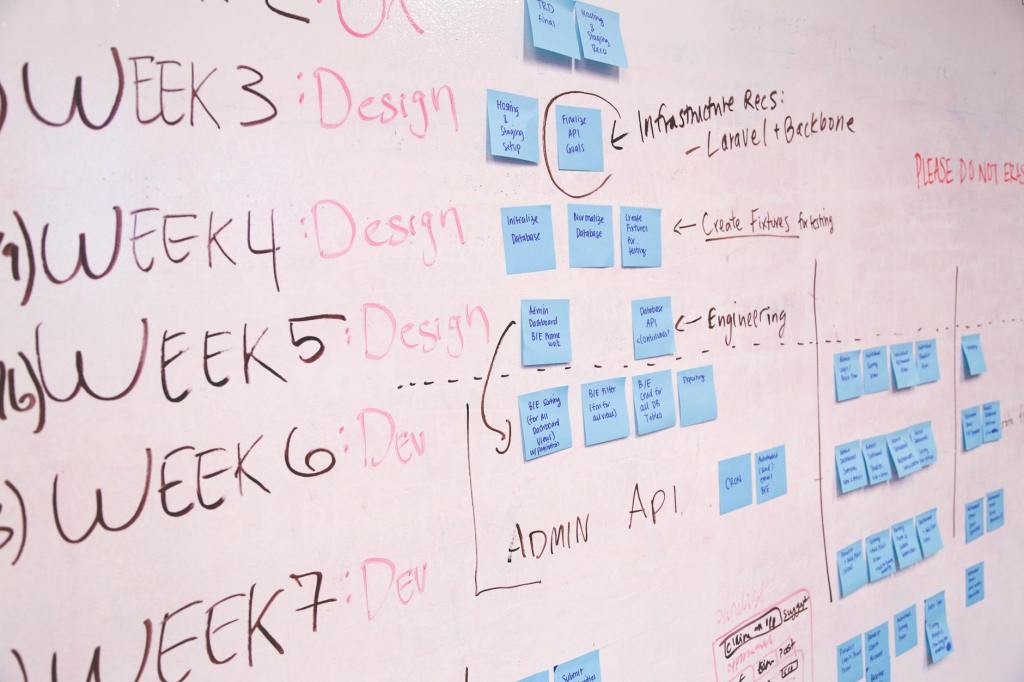

It’s The End of Agile (As We Know It)

AI is changing the way government teams practice agile. Are agencies ready for the change?

-

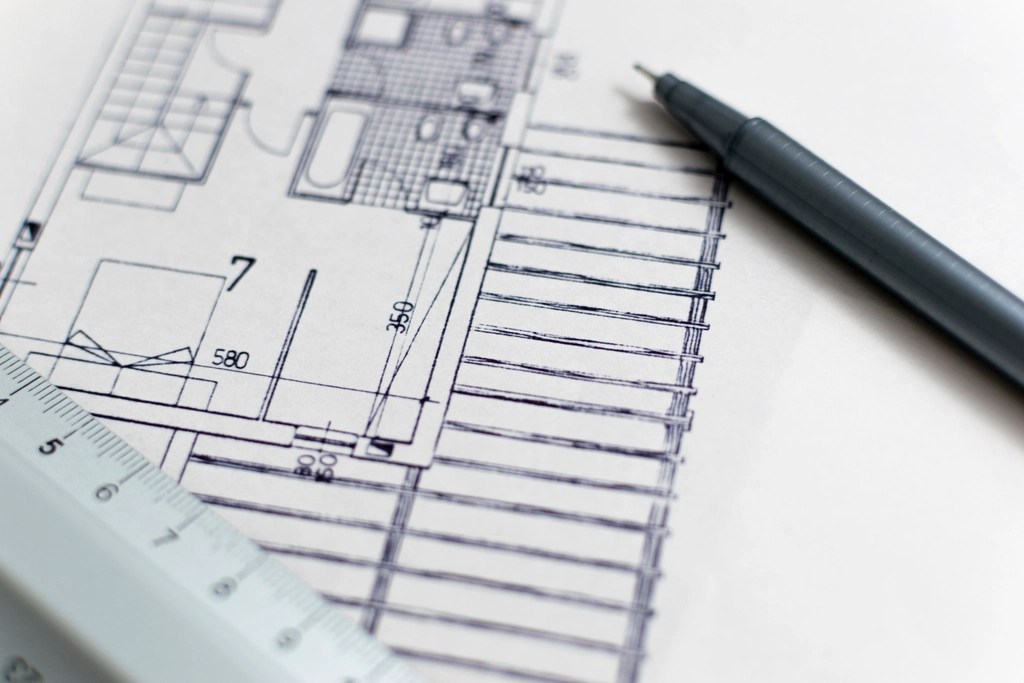

Using AI to Reverse-Engineer a Legacy Application into a Modern Software Specification

In this post, I demonstrate a hands-on example of using AI tools with a legacy technology system to build the foundation for a modern software solution.

-

Ending the Sugar Rush

A new study reveals a troubling pattern in open source projects adopting AI coding tools. The fix, it turns out, may lie in an idea as familiar as a CONTRIBUTING.md file, updated for the AI era.

-

From Sharing Code to Sharing Knowledge

For two decades, the vision for government technology has been “build once, share widely.” But as AI changes the economics of software development, the future may look less like sharing code and more like sharing the knowledge that makes building easier.

-

The Collapsing Cost of Software Development

The cost of generating software code is collapsing. AI coding tools have matured faster than most realize. For governments, this changes everything.

-

Disposable Software and the Future of Government Technology

AI is driving a shift toward “disposable software,” and this could have significant implications for the public sector. The SpecOps method offers one way for governments to adapt to this potential change.

-

AI-Powered Automation: Taking ATO Modernization Beyond the Bottleneck

Strategic use of AI tools can support efforts to automate the ATO process and dramatically reduce the time to authorize new technology systems.

-

Three Projects I’m Proud of in 2025

In 2025, I built a lot of things to help me understand the changes underway and what we need to focus on to make government work better. Here are the three I am most proud of.

-

What Does a Good Spec File Look Like?

I’ve been thinking a lot about spec-driven development lately, and that raised a question: what does a good spec file actually look like, and how do we know when it’s good? The answer: it depends.

-

The Future is Ahead of Schedule

In August, I wrote about just-in-time interfaces as a future vision for civic tech. Three months later, that future seems to be arriving.

About Me

I am the former Chief Data Officer for the City of Philadelphia. I also served as Director of Government Relations at Code for America, and as Director of the State of Delaware’s Government Information Center. For about six years, I served in the General Services Administration’s Technology Transformation Services (TTS), and helped pioneer their work with state and local governments. I also led platform evangelism efforts for TTS’ cloud platform, which supports over 30 critical federal agency systems.